[PYTHON] I tried to execute SQL from the local environment using Looker SDK

What i did

--Send SQL from your local environment using Looker API and receive the result

background

――It's a little tedious to download csv each time you analyze data, so I want to erase it before work is born. --If you have an SDK, you may be able to enjoy it even more.

What to prepare

- Python

- LookerSDK

Preparation

Looker Api SDK Official description https://docs.looker.com/reference/api-and-integration/api-sdk

GitHub https://github.com/looker-open-source/sdk-codegen/tree/master/python

Install for the time being

pip install looker_sdk

Authentication

--Click here to get API authentication key link --Click here for the types of environment variables link

###Environment variable settings

os.environ['LOOKERSDK_API_VERSION']='3.1'

os.environ['LOOKERSDK_BASE_URL']='https://xxxxxxxx.looker.com:19999'

os.environ['LOOKERSDK_CLIENT_ID']='xxxxxxxxxxxxxxxxxxxx'

os.environ['LOOKERSDK_CLIENT_SECRET']='xxxxxxxxxxxxxxxxxxxxxxxx'

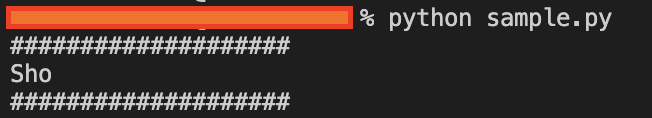

###Authentication

sdk = looker_sdk.init31()

my_user = sdk.me()

print('####################')

print(my_user["first_name"])

print('####################')

There seems to be a way to read the ini file, but

did it! It seems that the SDK will read the authentication information from the environment variables.

Run SQL from local via Looker and get the result!

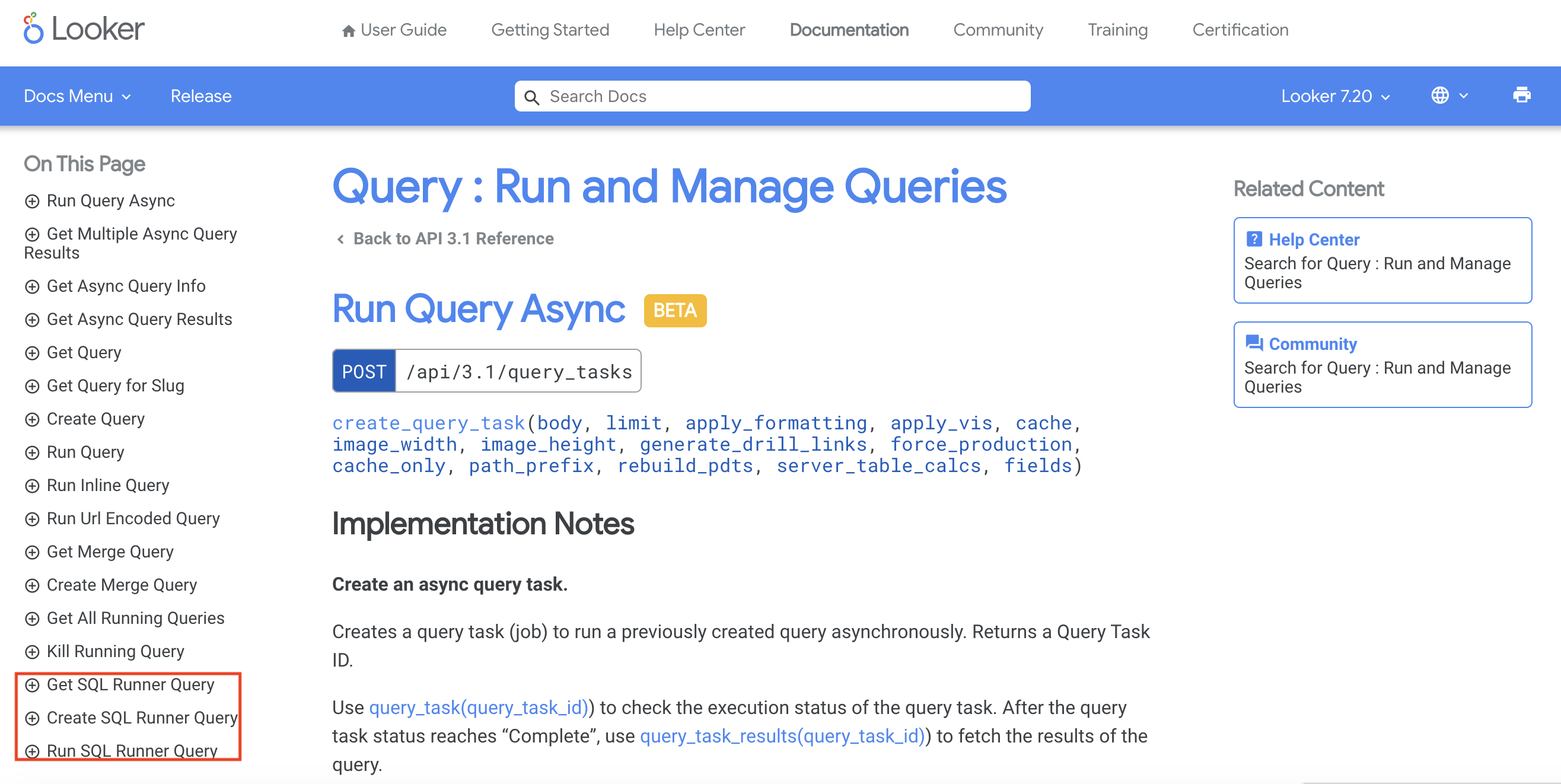

Where can I find out? .. ..

Let's take a look at the reference for the time being. .. .. .. When

Oh oh oh! ?? There is something called SQL Runner in the API reference. It's like that!

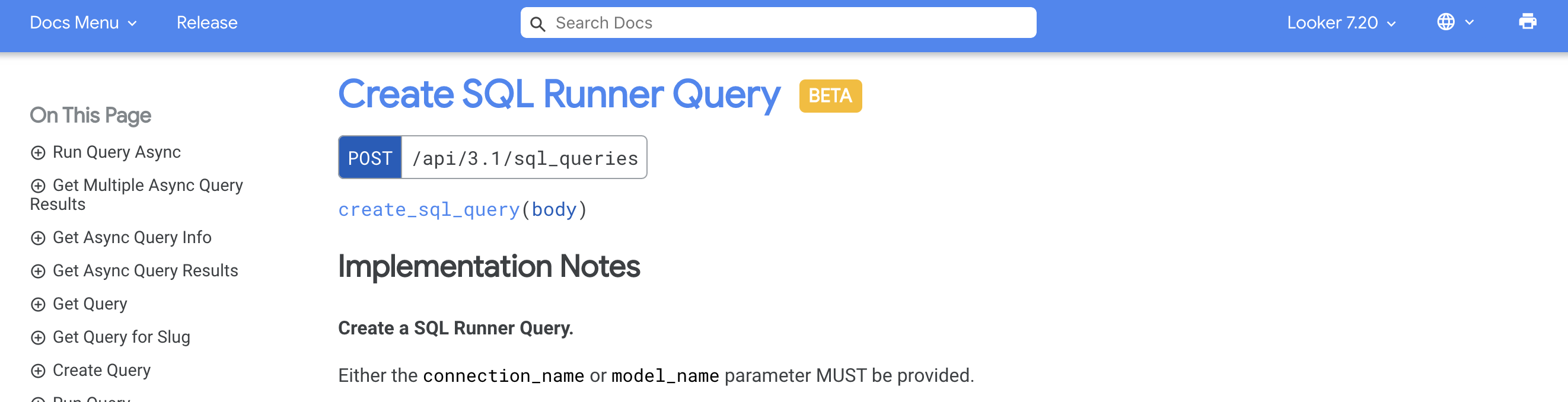

Apparently it looks like the BETA version. (As of December 23, 2020) If it changes, it has changed, so for the time being GoGo !!

Well how to go

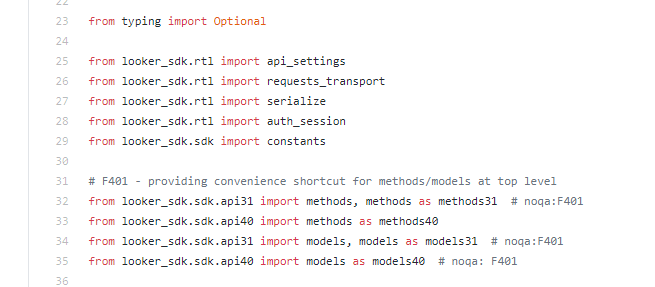

Probably a usable module looks like this

Link

Link

--There is no sample code, so it's a bit painful. --Looking at the reference, it seems that the operation of the corresponding part is as follows --Register query --Get query --Execute query --Probably it seems to be a mechanism to queue and execute. You can see the queue below - https://xxxx.looker.com/admin/queries

--As far as I can find it on the net, it seems that sdk will bring the method corresponding to the API URL. --This is in the reference. ――What should I do with the arguments? --Like creating with SqlQueryCreate - SqlQueryCreate - model_name ――I'm not sure. However, when I put the model string, it started to work. - sql --Explanation omitted

Query registration

from looker_sdk import methods31, models31

#Create API arguments

SQL = "select * from xxxxxxx limit 10"

query = models31.SqlQueryCreate( model_name="xxxxxxxx",sql="select * from public.xxxxx limit 10")

#Execute query

result = sdk.create_sql_query(body=query).slug

Apparently, there is a module in looker_sdk that corresponds to the API version. This time it is 3.1, so select the one that corresponds to it

Executing a query

sdk.run_sql_query(result,"csv",True)

- run_sql_query --First argument (required) --The query string that can be obtained when the query is registered --Specify the query to execute --Second argument (required) --Specify the output file format --It seems that you can output not only CSV but also json and txt. --Third argument (optional) --Specify whether to download --Default is false, no download

- Return value --When downloaded, it will come back in binary

--Check here when you throw SQL and an empty one comes back.

- https://xxxx.looker.com/admin/queries --You can see the log because it looks like a mechanism like putting it in a queue and executing it. ――It seems that you can't understand that SQL is moss from the API.

It will come back in binary, so if you save it in binary, you will be able to use it.

fw = open('result.csv', 'wb')

fw.write(sdk.run_sql_query(result,"csv",True))

fw.close()

in conclusion

To be honest, I was wondering if it would be nice to have Looker execute SQL locally. Many people look at the dashboard, and if you want, you can connect BigQuery with pandas, so most of the merits of what I investigated this time do not feel like how to use the SDK. While using Looker's assets, for queries that are too complicated or change frequently to handle on Looker, get it with SQL via SDK like this → Build a mechanism to automatically rotate analysis I think it is meaningful.

References

Get authentication key

- https://dev.classmethod.jp/articles/looker-api-get-data/

Official description

- https://docs.looker.com/reference/api-and-integration/api-sdk

looker-open-source(github)

- https://github.com/looker-open-source/sdk-codegen

- https://github.com/looker-open-source/sdk-codegen/tree/master/python

Recommended Posts