[PYTHON] Data analysis environment centered on Datalab (+ GCP)

This entry is a sequel to the following two entries.

- Overview of Cloud Datalab
- Good and regrettable parts of Cloud Datalab

Examination of analysis environment centered on Datalab

There are many ways to think about a data analysis environment, depending on the number of analysts, the company's line of business, the scale involved, and so on, and I find myself agonizing over it every time the subject comes up. I have high hopes for Datalab's future, but to state the conclusion first: unfortunately, Datalab alone does not seem to be functional enough. Still, now that deep learning has become something of a de facto standard among analysis techniques, I think it is worth reorganizing how we think about the analysis environment, so I am writing this down.

Significance of investing in an analytical environment

Retty's case became a hot topic, and I found it helpful myself. With deep learning now so popular, I expect most environments will settle in this direction in practice. Our own analysis environment is being built along similar lines.

This does not have the impact of the stories once heard in the social game industry, along the lines of "we improved the game through data mining and made ○ billion yen." Even so, Retty, mentioned above, seems to have already recovered more than it invested, so investing in an analysis environment does not appear to be pointless.

Assumed analysis environment requirements

The rest of this article assumes the following.

- There are multiple analysts (a reasonable number)
- There is a wide variety of themes to analyze
- Somewhat expensive resources such as GPUs are also required (for deep learning, etc.)

Data analysis environment configuration

I will describe the configuration by following how an analysis environment typically evolves.

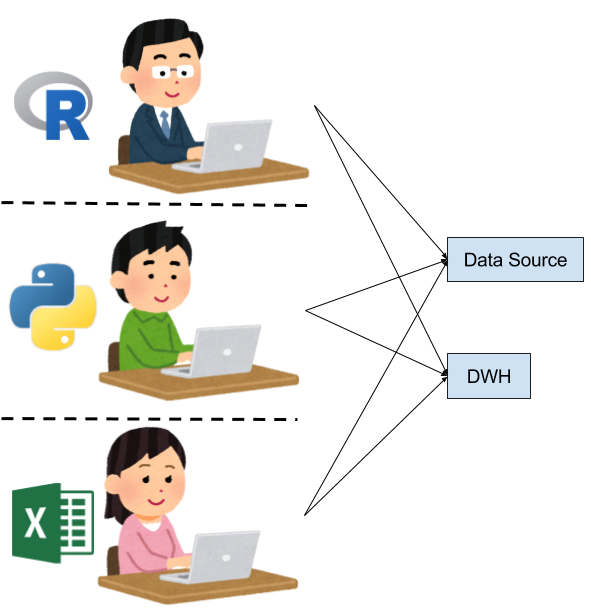

Each person builds an environment at hand

When analysis work first starts, things look like this.

- Each person builds an analysis environment as needed
- The data sources also differ; each person picks up whatever they need for their own analysis
- It is not clear which data can be used and which cannot

I think this is unavoidable when there are few people and no budget can be spent on analysis.

I want to align the data sources

As the next step, I think you will want to consolidate the data sources.

- Prepare shared storage, cloud storage, etc., and aggregate the data sources there
- Introduce a commercial DWH, Hadoop, etc. to handle data processing and primary processing
- Each person brings the processed results into their local analysis environment and analyzes them there

The analysis environments themselves still differ from person to person, but unifying the data sources improves many things. However, without a common analysis environment, analyzing as a team is costly, and each person is still doing analysis work independently.

I want to prepare an analysis environment

The next step is to collaborate on analysis work. At this stage, unless the analysis environment is standardized, it is difficult to divide up analysis work or do things like OJT, so the need to set up a common analysis environment arises.

- In most cases, the installation procedure is left in a document, and newcomers build their own analysis environment from it
- Using Jupyter, aligning Python versions, and the like ...
- In the better cases, a VM with the analysis environment already built is provided, or the execution environment is prepared as a Docker image and notebook (ipynb) files are shared

From the engineer's point of view, this is the phase where standardizing and sharing the analysis environment gradually becomes important. Before the advent of deep learning, an analysis environment at this stage could be called reasonably satisfactory.
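To make concrete why environments built by hand from a document tend to drift, here is a minimal sketch that compares a pinned package list against what is actually installed. The `PINNED` versions and both helper functions are hypothetical and chosen only for illustration; they are not taken from any setup described in this article.

```python
from importlib import metadata


def installed_versions():
    """Return {lowercased package name: version} for the current environment."""
    versions = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions whose metadata has no name
            versions[name.lower()] = dist.version
    return versions


def find_mismatches(pinned, installed):
    """Return {name: (expected, actual)} for packages that differ or are missing."""
    mismatches = {}
    for name, expected in pinned.items():
        actual = installed.get(name.lower())  # None means "not installed"
        if actual != expected:
            mismatches[name] = (expected, actual)
    return mismatches


# Hypothetical versions that a team's setup document might prescribe.
PINNED = {"numpy": "1.12.1", "pandas": "0.19.2"}
```

Calling `find_mismatches(PINNED, installed_versions())` on each analyst's machine would list the packages that differ from the team's pinned set. A shared VM or Docker image avoids needing this kind of check at all.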

Problems so far: Fucking query problem

Operating the configuration described so far as an analysis environment has its issues.

Because the data processing layer (DWH, Hadoop / Hive, Redshift, etc.) is shared, queries that trouble other users and the administrator (fucking queries) get written. The administrator of the DWH has to keep an eye out for fucking queries and kill them.

From the analyst's point of view, however, unless there are clear rules, there is a tendency to push as much of the data processing as possible into queries, and newly joined people tend to issue fucking queries with no malice at all. The fucking query problem is generally a cat-and-mouse game, and my perception is that there is no fundamental solution.
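The monitoring chore described above can be sketched roughly as follows. This is only an illustration under stated assumptions: the `RunningQuery` record, the 30-minute threshold, and the sample data are all hypothetical, and a real DWH exposes its own process list and kill command instead.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class RunningQuery:
    """One row of a hypothetical 'currently running queries' listing."""
    query_id: str
    user: str
    started_at: datetime


def find_runaway_queries(queries, now, limit=timedelta(minutes=30)):
    """Return queries that have run longer than `limit`, longest-running first."""
    overdue = [q for q in queries if now - q.started_at > limit]
    return sorted(overdue, key=lambda q: q.started_at)


# Hypothetical snapshot of the shared DWH at noon.
now = datetime(2017, 3, 1, 12, 0)
queries = [
    RunningQuery("q1", "alice", datetime(2017, 3, 1, 11, 55)),  # 5 minutes old
    RunningQuery("q2", "bob", datetime(2017, 3, 1, 10, 0)),     # 2 hours old
]
for q in find_runaway_queries(queries, now):
    print(f"candidate to kill: {q.query_id} (user={q.user})")
```

Even with a script like this, someone still has to decide on the threshold and actually do the killing, which is why the situation stays a cat-and-mouse game.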

I want to secure and share richer computational resources

Then, as a recent trend, there is a move to introduce GPUs in order to tackle deep learning seriously. In general, GPU machines are somewhat more expensive to procure than CPU machines, and they are still not cheap or easy to use in the cloud. Also, rather than wanting a large amount of GPU resources, teams tend at most to introduce a few high-performance GPUs for analysis on a trial basis, and in most cases it seems that the environment where those few GPUs can be used is built on-premise.

In that case the GPU machines are usually shared, and Docker images seem to be used often. The same is true of Retty's case. Since deep learning requires a lot of data, it is natural to attach a large disk to the GPU machine and keep the data close by.

Problem here: GPU borrowing problem

Of course, because the GPU is shared, exchanges about borrowing and returning the GPU start to take place in chat and the like. To make matters worse, deep learning takes a long time to train, so once you borrow a GPU you cannot easily give it back. When jobs that need the GPU overlap, resources are exhausted immediately; conversely, when nobody needs it, it sits completely idle. And since the data is also on-premise, backup and capacity problems can arise.

So let's consider a workflow centered on Datalab

How does a Datalab-centric workflow fit in here? I think it addresses the following.

Admin problem on the DWH side (countermeasures for fucking query problems)

- Leave it to BigQuery and solve fucking queries with brute force

Separation of calculation resources (GPU borrowing problem countermeasure)

- A GCE instance can be launched for each user
- Resources that are not shared with anyone else can be prepared immediately with a single command!
- You can raise or lower the instance specifications as needed
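As a rough sketch of the "one instance per user" idea, the snippet below builds a `datalab create` command line for each analyst. The `datalab-<user>` naming convention, the user names, and the chosen machine types are assumptions made for illustration. The commands are only printed, not executed.

```python
import shlex


def datalab_create_command(user, machine_type="n1-standard-1"):
    """Build the `datalab create` command line for one analyst's private instance."""
    instance_name = f"datalab-{user}"  # hypothetical naming convention
    return ["datalab", "create", "--machine-type", machine_type, instance_name]


for user in ["alice", "bob"]:  # hypothetical analysts
    # Printed rather than executed; subprocess.run(cmd, check=True) would run it.
    print(shlex.join(datalab_create_command(user, "n1-highmem-2")))
```

Because each analyst gets a separate instance, the machine type can be chosen per person instead of negotiating over one shared machine.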

Finite data capacity problem (GPU borrowing problem countermeasure)

- Datalab can access GCS, so the capacity problem is likely to be effectively resolved

Miscellaneous

- You can use it with your Google account!
- Access can be restricted appropriately through IAM, so it seems relatively easy to manage

Datalab seems to solve everything (I wanted to say)

So, Datalab, let's all use it! ...is what I would like to say, but the whole plan falls apart because GPU instances cannot be used (laugh). It is regrettable, truly regrettable. Also, as some have pointed out, Datalab has the Python 2 problem as well.

There are still many issues to be solved, but I personally would like to pay attention to the data analysis environment on GCP centered on Datalab.

This story is covered at the Cloud NEXT Extended [Away study session to be held on April 1](https://algyan.connpass.com/event/52494/).
