[PYTHON] I didn't understand choreography, so I implemented it and released the entire source code.

Choreography and orchestration

Engage each component of the system in the decision-making process for the workflow of business transactions, rather than relying on a central control point.

Reference: Choreography

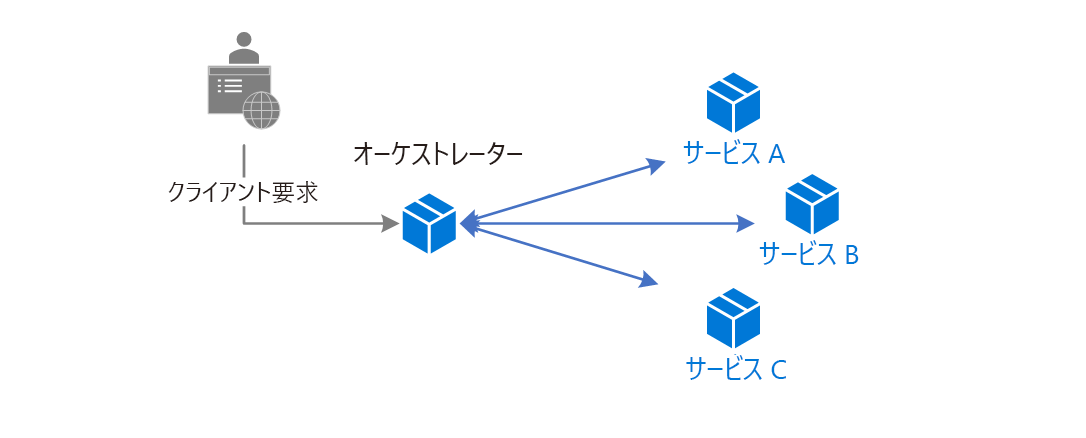

Reference: Choreography

In a typical architecture, one server receives the client request and sends commands to services such as service A, service B, and service C. For example, when a user registers, it saves the user information in the DB and then sends a welcome email to the user's email address. Such a system is called orchestration, and the server that first receives the client request is called the orchestrator. It is easy to picture if you think of a musical orchestra: there is one conductor, who directs the various instruments to produce the sound.
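As a rough illustration of the orchestration style described above, here is a minimal in-memory sketch. The function names and the two lists standing in for the DB and the mail server are assumptions made for this example; they are not taken from the sample repository.

```python
# Hypothetical in-memory stand-ins for the DB and the mail server.
saved_users = []
sent_mails = []


def save_user(user):
    """Service A: persist the user (a list stands in for the DB)."""
    saved_users.append(user)


def send_welcome_mail(user):
    """Service B: send the welcome email (a list stands in for the mail server)."""
    sent_mails.append(f"Welcome, {user['name']}! <{user['email']}>")


def register_user(name, email):
    """The orchestrator: it knows every step and calls each service in order."""
    user = {"name": name, "email": email}
    save_user(user)
    send_welcome_mail(user)
    return user


register_user("alice", "alice@example.com")
```

The point to notice is that `register_user` has to be edited every time a new step is added, because the orchestrator owns the whole workflow.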

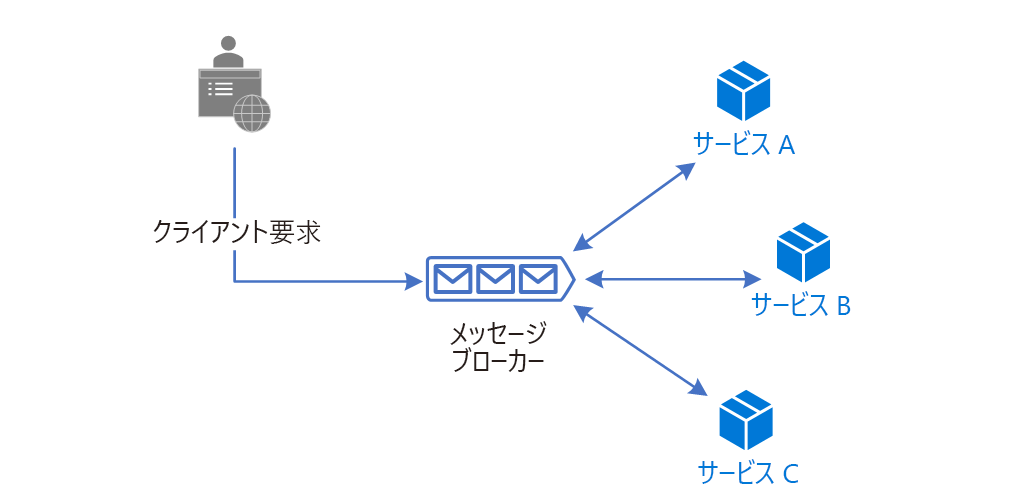

Reference: Choreography

Choreography, on the other hand, has a message broker that holds event information. Service A, Service B, and Service C each subscribe only to the events they are interested in, and act when such an event is published. For example, when a "user registration" event arrives, the user registration service saves the user's information in the DB. Likewise, when the same "user registration" event arrives, the email service sends a welcome email to the user.
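The same flow in the choreography style might look like the sketch below. The `Broker` class is a toy written for this example (it is not RabbitMQ and not code from the repository), and the event name `user_registered` is likewise an assumption.

```python
from collections import defaultdict


class Broker:
    """Toy in-memory message broker, for illustration only."""

    def __init__(self):
        self.subscribers = defaultdict(list)

    def subscribe(self, event_name, handler):
        # Each service registers only for the events it cares about.
        self.subscribers[event_name].append(handler)

    def publish(self, event_name, payload):
        # The publisher does not know who is listening.
        for handler in self.subscribers[event_name]:
            handler(payload)


broker = Broker()
db, outbox = [], []

# Two independent services subscribe to the same event.
broker.subscribe("user_registered", db.append)
broker.subscribe("user_registered", lambda user: outbox.append(user["email"]))

broker.publish("user_registered", {"name": "alice", "email": "alice@example.com"})
```

Here the publishing side only calls `publish` once; adding another subscriber does not require touching it.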

After reading this far, can you picture **how to implement choreography? I couldn't.** I often see the word itself, but I have not seen many reference implementations. **So I built one myself.** That is what this article is about.

Questions about choreography

1. Can one message fire multiple events?

2. Is a single message queue (MQ) enough?

3. What do you do with the response?

As mentioned above, the "user registration" event triggers two actions: "persist to the DB" and "send a welcome email". In this case, **the event issuer wants to issue only one event.** Suppose, for example, that I later want to connect a new "coupon issuing system for new registrants". Even if the number of message subscribers grows like this, ideally the issuer does not have to change. That is question 1.

Next, suppose we extend the "user registration" above into a "user management system", and want to handle "user deletion" in the same system. That means **two kinds of messages, "user registration" and "user deletion", are sent to the same MQ. Can it handle that properly?** Does such a case need two MQs, or is one enough? That is question 2.

Finally, when you register a user, I would expect JSON containing the user ID, name, and email address to be returned. But **in a choreography system there is an MQ in between. Doesn't that make it impossible to return a value?** As far as I knew, publishing to an MQ is fire-and-forget: all the publisher learns is whether the message was persisted to the MQ or not. So receiving the processed result seemed difficult. Is it really impossible, or can it be done? That is question 3.

Sample system

It's a very simple system: the "user management" system I mentioned earlier.

- User information is saved when a user registers
- User information is destroyed when the user is deleted

It has only two functions.

But one day

--Send a welcome email when you register as a user

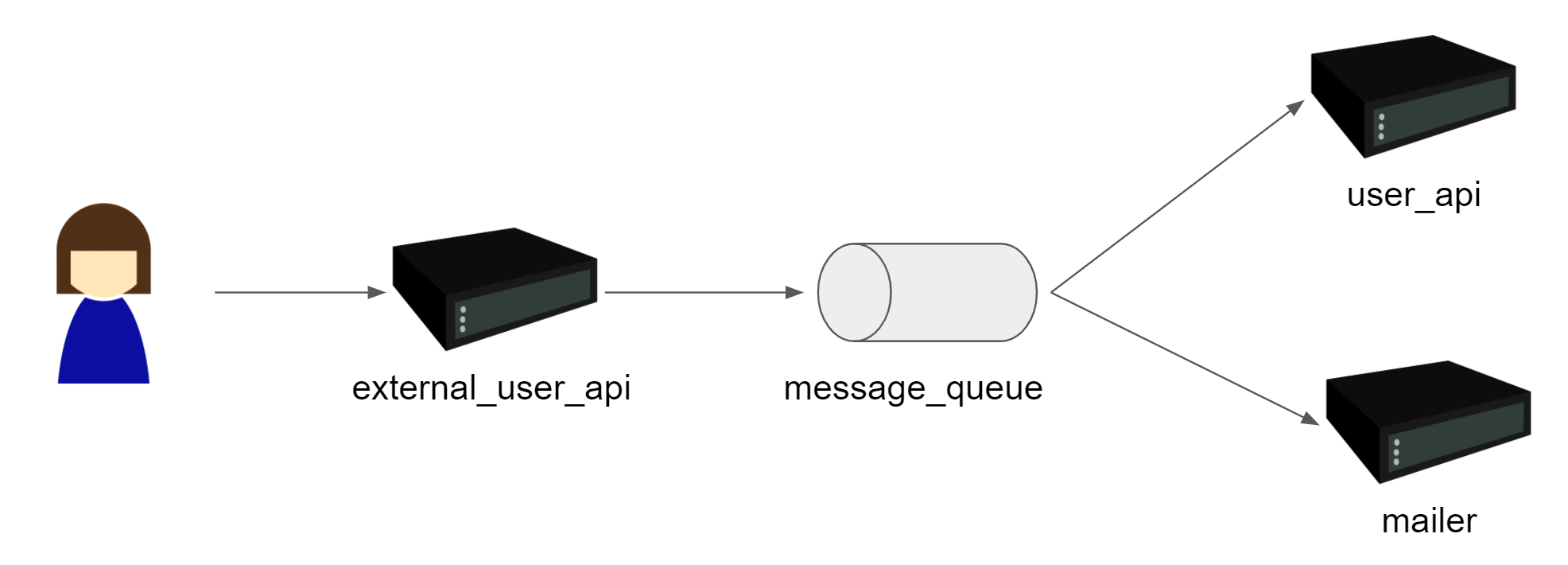

The specification was added. Therefore, I decided to introduce choreography into the architecture and configure it as follows.

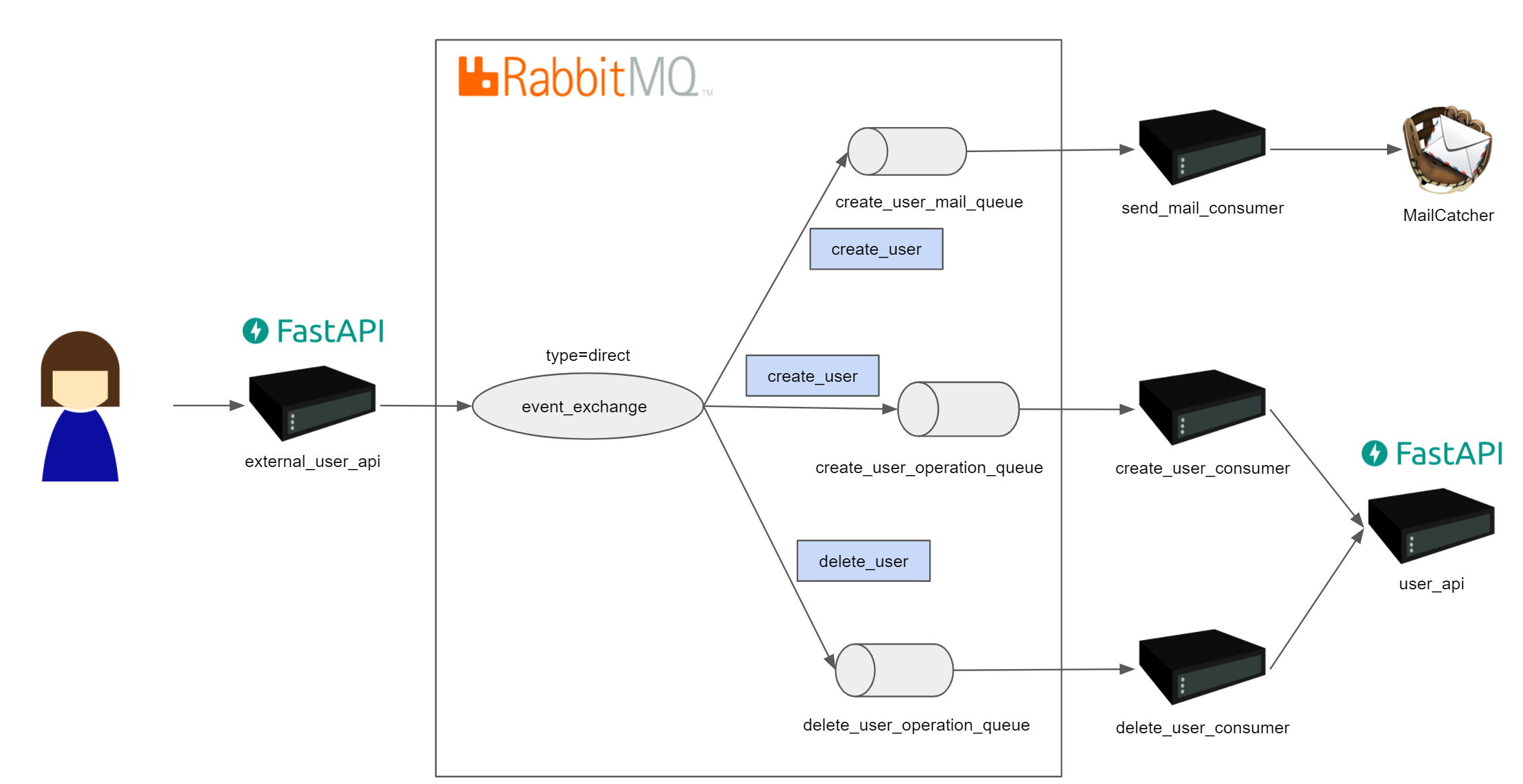

external_user_api receives information from the user and forwards it to the message queue. user_api and mailer then react to the content of the message, for example by sending a welcome email or deleting the user. That was the design I had in mind.

The system I actually built

The code is here: https://github.com/kotauchisunsun/choreography_sample

The implementation is in Python, with FastAPI as the API framework. The MQ is RabbitMQ, and the library used to connect to it is pika, which the official RabbitMQ documentation recommends. I also use MailCatcher to confirm that emails are sent.

Basically, the following should be enough to get it running:

```
$ git clone https://github.com/kotauchisunsun/choreography_sample.git
$ cd choreography_sample
$ docker-compose up
```

Exchange and Routing

In general, MQ-based systems have three components.

Quote: Hello World!

A Publisher that publishes messages, a Consumer that subscribes to messages, and a Message Queue that holds the messages. In addition, RabbitMQ has features called Exchange and routingKey.

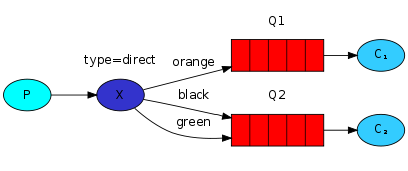

Quote: Routing

Here, X is the Exchange, and orange, black, and green are routingKeys. A Publisher sends each message together with a routingKey. For example, if you publish a message to the Exchange X with the routingKey orange, the Exchange routes the message to Q1. Similarly, if you publish a message with the routingKey black, it is sent to Q2. To realize choreography this time, I set create_user and delete_user as routingKeys, so that publishing to a single Exchange routes each message to the appropriate queue.

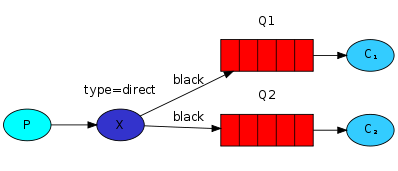

Quote: Routing

Also, as shown in the figure above, the routingKey black can be bound to both Q1 and Q2. In this case, a single message sent to the Exchange is copied to each queue. For example, if the publisher publishes the message "hello" with the routingKey black, Q1 will contain "hello" and Q2 will also contain "hello". In this system, binding the routingKey create_user to two queues lets a single publish trigger both operations: "user registration" and "sending the welcome email".
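To make the routing rules above concrete, here is a toy model of a direct exchange in plain Python. It only imitates the behavior; it is not pika or RabbitMQ code, and the class and queue names are assumptions for this example. With pika, the corresponding steps would go through `channel.exchange_declare`, `channel.queue_bind`, and `channel.basic_publish`.

```python
from collections import defaultdict, deque


class DirectExchange:
    """Toy model of a direct exchange: routing key -> bound queues."""

    def __init__(self):
        self.bindings = defaultdict(list)

    def bind(self, queue, routing_key):
        self.bindings[routing_key].append(queue)

    def publish(self, routing_key, body):
        # Every queue bound with this key receives its own copy of the message.
        for queue in self.bindings[routing_key]:
            queue.append(body)


# Hypothetical queues, one per consumer.
create_user_queue = deque()
welcome_mail_queue = deque()
delete_user_queue = deque()

exchange = DirectExchange()
exchange.bind(create_user_queue, "create_user")   # user registration consumer
exchange.bind(welcome_mail_queue, "create_user")  # same key, second queue
exchange.bind(delete_user_queue, "delete_user")

exchange.publish("create_user", '{"name": "alice"}')
exchange.publish("delete_user", '{"id": 1}')
```

One publish with `create_user` lands in two queues, while `delete_user` goes only to its own queue, all through a single exchange.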

Response problem

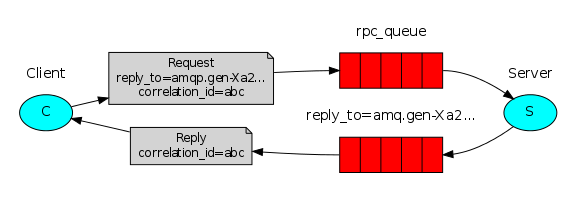

How do you get a response when using choreography? Something similar is described in RabbitMQ's RPC tutorial.

The client sends a message to the server through a queue. A separate queue is prepared for the response; the server writes the response to that queue, and the client reads it. Put that way it sounds obvious, but can it actually be done? It looks like this.

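```python
import uuid

import pika

# Excerpt: these are methods of the RPC client class in the RabbitMQ tutorial.
# self.connection, self.channel and self.callback_queue are set up elsewhere.


def on_response(self, ch, method, props, body):
    # Accept only the reply that matches the request we sent.
    if self.corr_id == props.correlation_id:
        self.response = body


def call(self, n):
    self.response = None
    self.corr_id = str(uuid.uuid4())
    self.channel.basic_publish(
        exchange='',
        routing_key='rpc_queue',
        properties=pika.BasicProperties(
            reply_to=self.callback_queue,  # queue the server should reply to
            correlation_id=self.corr_id,
        ),
        body=str(n))
    # Block until on_response stores the reply.
    while self.response is None:
        self.connection.process_data_events()
    return int(self.response)
```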

To explain part of the client side: the important part is basic_publish. Its properties include an attribute called reply_to, which is set to callback_queue here. This is the response queue. After publishing, self.connection.process_data_events() is called in a loop. When a message arrives, on_response sets self.response, the loop exits, and the value is parsed as an int and returned. A DB such as MySQL does not create or drop tables very dynamically, but RabbitMQ can create and delete queues easily, which seems to be what makes this kind of approach possible.

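```python
import pika


def on_request(ch, method, props, body):
    n = int(body)

    print(" [.] fib(%s)" % n)
    response = fib(n)  # fib() is defined elsewhere in the tutorial's server code

    # Reply to the queue the client named, echoing its correlation id.
    ch.basic_publish(
        exchange='',
        routing_key=props.reply_to,
        properties=pika.BasicProperties(
            correlation_id=props.correlation_id),
        body=str(response))
    ch.basic_ack(delivery_tag=method.delivery_tag)
```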

The server side is also simple. Again the key is basic_publish, where routing_key is set to props.reply_to. This is the reply_to that the publishing side specified. In this way, RPC is realized by specifying, as a property of the message, the queue to which the response should be returned.
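The whole round trip can be followed without a broker using the sketch below. It is a toy simulation: the `queues` dictionary, the three helper functions, and the local `fib` are assumptions for this example, and only the roles of `reply_to` and `correlation_id` mirror the tutorial code.

```python
import uuid
from collections import deque

queues = {"rpc_queue": deque()}  # toy broker: queue name -> pending messages


def fib(n):
    return n if n < 2 else fib(n - 1) + fib(n - 2)


def client_send(n):
    """Publish a request that names its reply queue and carries a correlation id."""
    reply_to = f"reply-{uuid.uuid4()}"  # per-client callback queue
    queues[reply_to] = deque()
    corr_id = str(uuid.uuid4())
    queues["rpc_queue"].append(
        {"reply_to": reply_to, "correlation_id": corr_id, "body": str(n)})
    return reply_to, corr_id


def server_handle_one():
    """Consume one request and publish the result to the queue named in reply_to."""
    request = queues["rpc_queue"].popleft()
    result = fib(int(request["body"]))
    queues[request["reply_to"]].append(
        {"correlation_id": request["correlation_id"], "body": str(result)})


def client_receive(reply_to, corr_id):
    """Read the reply queue and accept only the message with the matching id."""
    while queues[reply_to]:
        message = queues[reply_to].popleft()
        if message["correlation_id"] == corr_id:
            return int(message["body"])
    return None


reply_to, corr_id = client_send(10)
server_handle_one()
result = client_receive(reply_to, corr_id)
```

The server never needs to know who the client is; everything it needs to reply travels in the message properties.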

Having written all this, I doubt I will use it much. If the requirement is RPC, there are alternative technologies such as gRPC, so there seems to be little merit. Go back to why you would use an MQ in the first place: because each piece of processing takes time. For example, suppose you accept requests from users through a REST API and the back end is RPC over MQ. If the processing behind the MQ takes a long time, the REST API becomes one that frequently times out, which is not something you can offer to users. And if the processing does not take long, a plain REST API behind the scenes would do. And if it is used for server-to-server communication rather than exposed directly to users, gRPC would do.

I think the strength of choreography is that the event issuer publishes events one-way and other services react to them. That way, even as the number of cooperating servers grows, you can add features without changing the event issuing side. If the issuer received return values, the number of responses would change every time a cooperating service was added, and the issuing side and the cooperating side would become tightly coupled. For that reason, I concluded that in choreography it is better for the event issuer not to receive a return value.

What the customer really wanted

The problem with choreography is recovery when a failure occurs. With the system I built, if the mail system goes down, the user is registered but no welcome email is sent. And if user_api goes down, a welcome email is sent even though user registration failed. Confusing states like these can occur. And because I stuck too closely to pure choreography and there is no response, the client cannot even tell whether the user registration succeeded.

In the end, I think the following architecture might have been better: user_api is responsible for both persisting to the database and publishing events. This lets it return a response, and because it also publishes events to the MQ, the system stays extensible. For example, if the DB write is wrapped in a transaction and the publish to the MQ happens inside it, then when the MQ is down the publish fails and nothing is written to the DB. Conversely, if a message is published to the MQ but the DB commit then fails, the consumer can call user_api to check whether the user exists and send the email only if it does. This prevents a welcome email from being sent to a user whose registration failed.
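A minimal sketch of that idea, using sqlite3 from the standard library in place of the real DB and plain functions in place of the MQ client. All names here (`register_user`, `should_send_welcome`, `BrokerDown`, the `users` table) are assumptions for this example. In the article's design the consumer would ask user_api whether the user exists; the direct query here is only a stand-in for that call.

```python
import sqlite3


class BrokerDown(Exception):
    """Raised by the (hypothetical) publish function when the MQ is unreachable."""


def register_user(conn, publish, name, email):
    """Insert the user and publish the event inside one DB transaction."""
    try:
        with conn:  # commits on success, rolls back if an exception escapes
            conn.execute(
                "INSERT INTO users (name, email) VALUES (?, ?)", (name, email))
            publish("create_user", {"name": name, "email": email})
    except BrokerDown:
        return False
    return True


def should_send_welcome(conn, email):
    """Consumer-side guard: send the email only if the user really exists."""
    row = conn.execute(
        "SELECT 1 FROM users WHERE email = ?", (email,)).fetchone()
    return row is not None


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, email TEXT)")
conn.commit()

published = []


def working_publish(key, body):
    published.append((key, body))


def broken_publish(key, body):
    raise BrokerDown("MQ is unreachable")


ok = register_user(conn, working_publish, "alice", "alice@example.com")
failed = register_user(conn, broken_publish, "bob", "bob@example.com")
```

Because the publish sits inside the transaction, the failed registration for bob leaves no row behind, and the consumer-side check covers the opposite case where a message exists but the user does not.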

Answers to questions

1. Can one message fire multiple events? -> Yes. By binding the same routingKey to multiple queues, a single published message can fire multiple kinds of events (user registration and the welcome email, both triggered by create_user).

2. Is a single message queue (MQ) enough? -> Multiple queues are required. However, the message issuer can fire different events through a single Exchange by changing the routingKey when publishing (create_user and delete_user).

3. What do you do with the response? -> You can get a return value by specifying, as a property when issuing the message, the queue in which to put the response. However, it does not seem to be something you would use much.

Impressions

**Overwhelmingly tedious**

This time I prepared the environment with docker-compose, and it ended up with 7 containers. RabbitMQ and MailCatcher are existing images, so I built five containers myself. I don't want to write Dockerfiles for this kind of verification; if possible, I want to use only existing images.

At first I made only user_api. From there, my plan was to build external_user_api to publish to the MQ and to modify user_api to subscribe to create_user. But then I realized that, thinking about production operation, an API already running as user_api would not be changed to subscribe to an MQ, and so create_user_consumer was born. create_user_consumer then needs to call the user_api API, which meant bringing in the swagger toolchain, generating a client from swagger, wiring it in, setting the host name, and so on. It grew like a snowball. I also needed three consumers to verify the questions. To keep the whole system loosely coupled, settings had to be passed from docker-compose through environment variables, so the cost of the glue for loose coupling was needlessly high.

The good point is that I chose middleware that is easy to inspect. RabbitMQ has a web UI that lets you see the contents of queues, Exchanges, and messages. MailCatcher also has a web UI, so you can check what kind of email was sent. And since I built the API with FastAPI, swagger's web UI came with it and I could easily try the API from there, so the verification loop was very easy to run.

This was meant to be a verification of choreography, but in practice it was more like studying RabbitMQ. Is Redis the mainstream choice for this purpose now? Running these in the cloud also seems troublesome: many recent server platforms are stateless or do not start without a request (Heroku, etc.), so isn't it hard to set up this kind of consumer there? I need to catch up on which infrastructure such consumers can be built on.

Those were my thoughts from this verification. If you think "this is not choreography!" or "I know a better sample!", please let me know.
